AI Powered 3D Vision System for Robotic Pick&Place Applications

Capturing Speed

VCV-Cortex is an AI-powered stereovision system developed for robotic applications. It works with any camera and optical system to provide the desired range, field of view (FoV) and precision. Unlike structured light cameras, it can capture dark and/or shiny objects at high speed.

VCV-Cortex pose estimation viewer showing a bin full of dark, shiny rivets. The detected rivet is highlighted in green and its 6D pose is shown in the bottom left corner. Being a pre-trained AI system, it needs less than one minute to learn the geometry of any new part. (Image: Vancouver Computer Vision Ltd.)

Stereovision imitates the human visual system and was one of the first attempts at building a 3D vision system for robots and machines. However, traditional algorithms have very low accuracy and break down when facing texture-less surfaces. As a result, active systems, especially structured light cameras, are now the standard 3D vision systems in industry. Structured light cameras are well suited to metrology and quality inspection. For lack of a better solution, however, they are also being fitted into robotic pick&place applications, where they leave a lot to be desired. Casting the light pattern and capturing it can be time consuming. The same process prevents them from capturing dark and/or shiny objects in one shot; they require HDR imaging, which costs additional time. They cannot work next to each other because of cross talk between their light projections. They can be blinded by intense light such as that from welding or the sun. They have low resolution and offer limited FoV and range. Because of these issues, industries still prefer to use humans or 2D vision to solve manufacturing challenges.

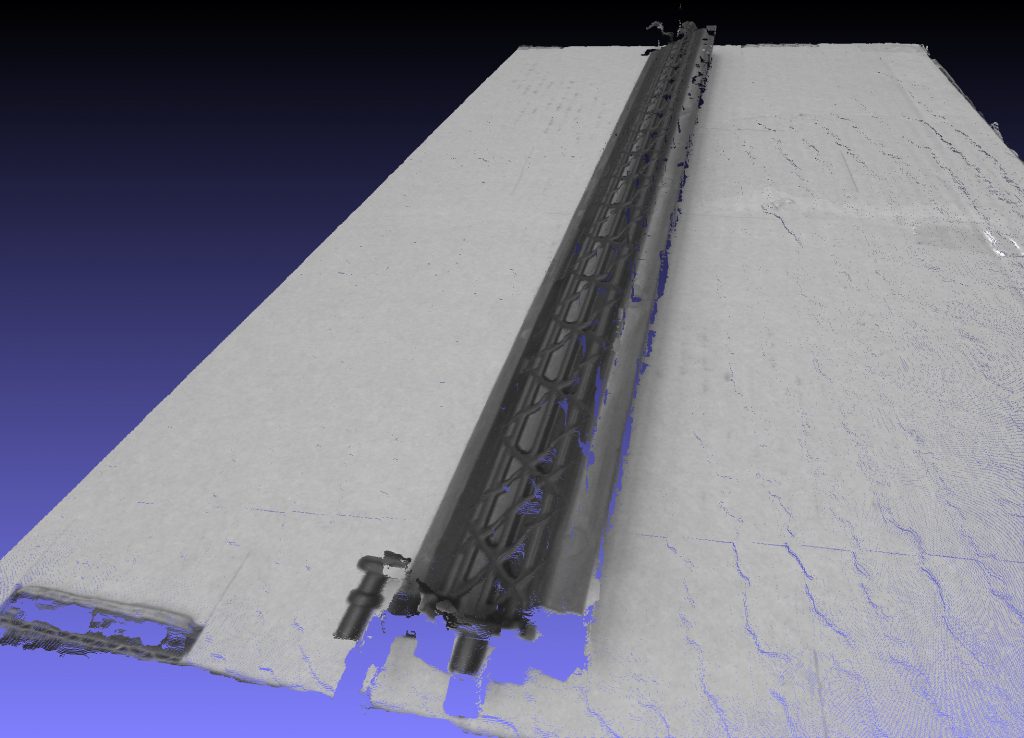

Point cloud of a dark and shiny PVC part sitting on top of a cardboard box: top generated by a structured light system, bottom created by VCV-Cortex. (Image: Computer Vision Ltd.)

Scene Capture in ms

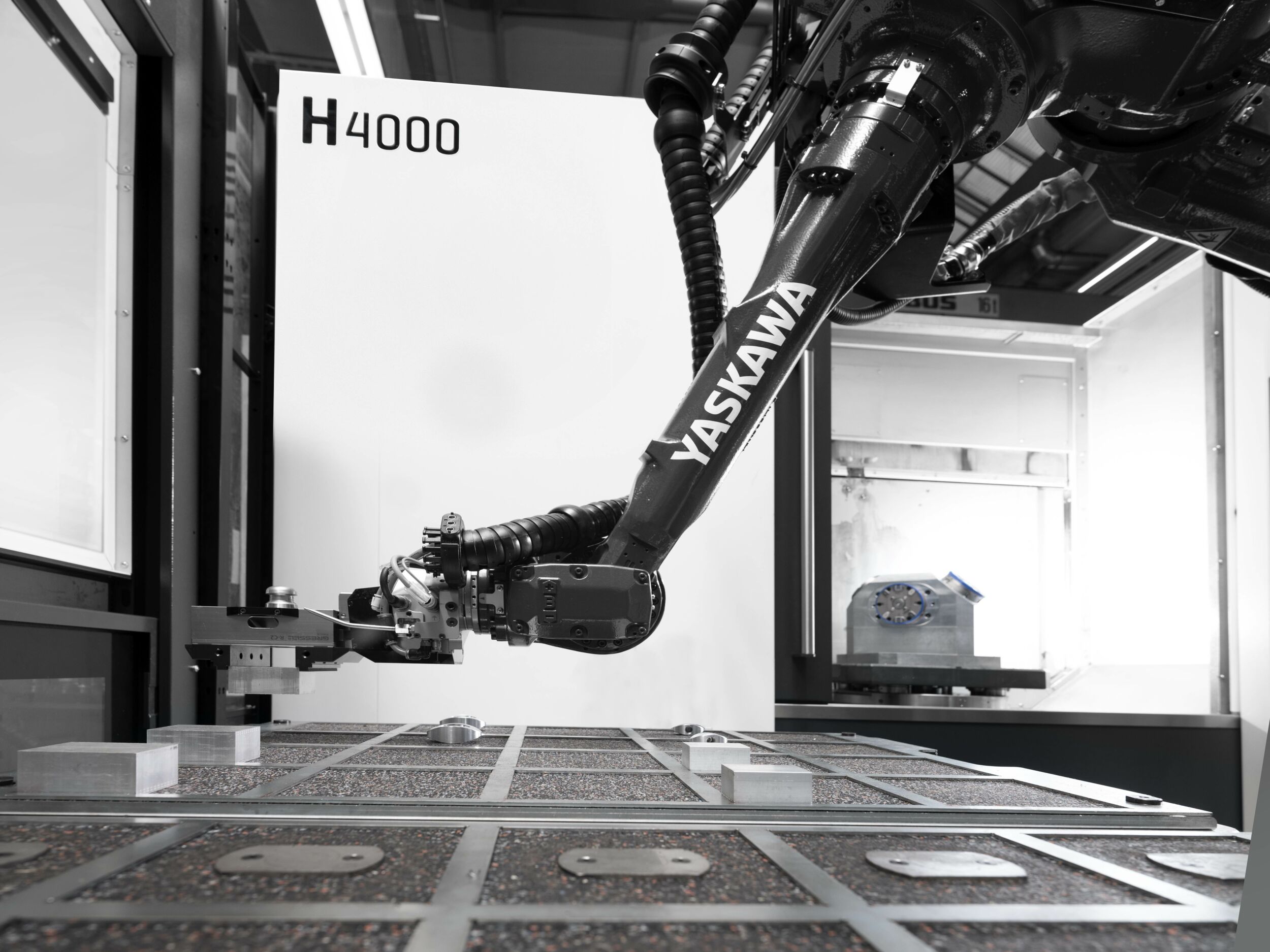

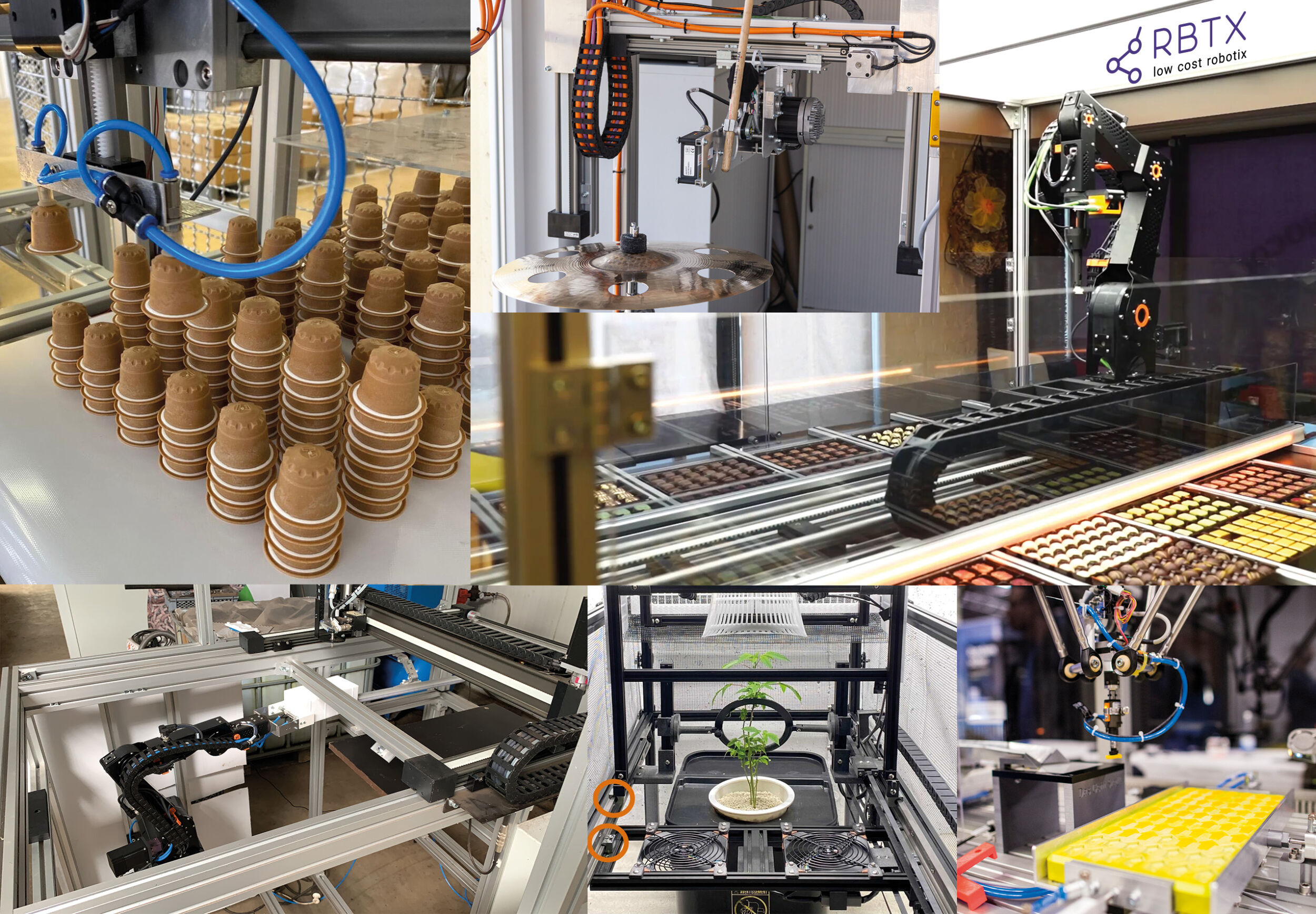

Unlike structured light cameras, VCV-Cortex has been developed specifically for robotic pick&place applications. It is an AI-powered stereovision system without the shortcomings of traditional stereo systems. The system offers a fully integrated pipeline for capturing the scene, finding the desired object and calculating its 6D pose. Being a pre-trained AI, it needs less than one minute to learn the geometry of any new object from its 3D model in order to find it in the scene. It can use any combination of off-the-shelf 2D cameras and lenses to offer the desired resolution, range, FoV and precision. The capture time is limited only by the camera and the available light in the scene, so the system works on moving, static, shiny and dark objects alike. It is a passive system and is therefore immune to interference from intense light such as the sun or welding, or from a similar vision system nearby (cross talk).

Perhaps the most important advantage is its capturing speed. Because it relies on 2D cameras, it needs only milliseconds to capture the scene, and the robotic arm has to be out of the way only during that time. The rest of the processing runs on the hardware, which increases the overlap between the vision and robot cycles and significantly shortens the overall pick and place cycle time. In any case, the current version of VCV-Cortex can complete a full vision cycle (capture, object recognition and pose estimation) in under two seconds. The system comes preinstalled on an industrial embedded box and can be combined with a custom imaging system or obtained as one of the following preconfigured systems:

- VCV-Cortex M: 5MP camera, up to 800mm range, 560×470mm FoV

- VCV-Cortex L: 12MP camera, up to 1,500mm range, 1,760×1,300mm FoV

Users can choose one of the above systems or design a completely different imaging system based on the task requirements. The cameras are installed at the desired location and calibrated in less than a minute using the VCV-Cortex calibration module and pad. A 3D model of the desired object is then uploaded, after which the system is ready to find the object in the scene and provide its 6D pose to the robot's PLC.
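When comparing the preconfigured systems or sizing a custom one, a rough first check is how much of the scene each pixel covers. The following Python sketch estimates that from the FoV and camera resolution quoted above. It rests on assumptions that are not from the source: square pixels, the full sensor imaging exactly the quoted FoV, and a hypothetical helper name `approx_pixel_footprint_mm`. The result describes image sampling density only, not the 3D precision of the system.

```python
import math


def approx_pixel_footprint_mm(fov_w_mm, fov_h_mm, megapixels):
    """Approximate side length (mm) of the scene patch seen by one pixel.

    ASSUMPTION: square pixels and the full sensor covering exactly the FoV.
    """
    area_per_pixel_mm2 = (fov_w_mm * fov_h_mm) / (megapixels * 1e6)
    return math.sqrt(area_per_pixel_mm2)


# FoV and resolution values as quoted for the preconfigured systems.
configs = {
    "VCV-Cortex M": (560, 470, 5),
    "VCV-Cortex L": (1760, 1300, 12),
}

for name, (w_mm, h_mm, mp) in configs.items():
    footprint = approx_pixel_footprint_mm(w_mm, h_mm, mp)
    print(f"{name}: ~{footprint:.2f} mm per pixel")
```

Under these assumptions the M configuration samples the scene at roughly 0.23mm per pixel and the L configuration at roughly 0.44mm per pixel, which illustrates the usual trade-off between a larger FoV and finer sampling.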

Vision cycle less than 2s

In one customer application, injection molding machines produced four parts every 12s. The parts dropped randomly on top of each other onto an 800×600mm work table, effectively creating a 3D bin-picking environment from which the robot had to pick the parts and sort them into different bins. Given the production rate, a full vision cycle of less than 2s was required. The large FoV and the dark, shiny finish of the parts made it impossible for existing structured light cameras to meet the time requirement. Using VCV-Cortex L, the manufacturer achieved a full cycle time of 1.7s. This allowed the company to relax some of its requirements for the type of robot, automate a physically demanding and tedious job, and save significantly on costs.
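The timing in this example can be checked with simple arithmetic. The sketch below takes the production rate and the 1.7s vision cycle from the case above; the capture time of 20ms and the robot motion time of 2.5s are purely hypothetical values chosen for illustration, and the overlap model (capture first, then vision processing running in parallel with robot motion) is a simplified assumption rather than a description of the actual installation.

```python
# Values from the case study.
PARTS_PER_BATCH = 4
BATCH_PERIOD_S = 12.0
VISION_CYCLE_S = 1.7  # capture + object recognition + pose estimation

# ASSUMPTIONS (hypothetical, not from the source).
CAPTURE_S = 0.02      # "milliseconds" of capture with the arm out of the way
ROBOT_MOVE_S = 2.5    # one pick-and-place motion of the robot


def budget_per_part(period_s, parts):
    """Average time available per part to keep up with production."""
    return period_s / parts


def sequential_cycle(vision_s, robot_s):
    """Cycle time if the robot waits for the whole vision cycle."""
    return vision_s + robot_s


def overlapped_cycle(capture_s, vision_s, robot_s):
    """Cycle time if only the capture blocks the robot and the remaining
    vision processing runs while the robot is moving."""
    processing_s = vision_s - capture_s
    return capture_s + max(processing_s, robot_s)


budget = budget_per_part(BATCH_PERIOD_S, PARTS_PER_BATCH)
seq = sequential_cycle(VISION_CYCLE_S, ROBOT_MOVE_S)
ovl = overlapped_cycle(CAPTURE_S, VISION_CYCLE_S, ROBOT_MOVE_S)

print(f"budget per part:  {budget:.2f} s")
print(f"sequential cycle: {seq:.2f} s (keeps up: {seq <= budget})")
print(f"overlapped cycle: {ovl:.2f} s (keeps up: {ovl <= budget})")
```

With four parts every 12s, the average budget is 3s per part. Under the assumed numbers, a robot that waits for the full vision cycle would need 4.2s per part, whereas overlapping processing with motion brings the cycle to about 2.5s, which is one way a short capture time can make a slower robot viable.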